"Le Guin was a visionary who wrote a really deep and literary novel about gender and sexuality and how much of it is a social construct or whatever": I sleep

"Le Guin was an Antarctica fangirl who had opinions about the 1980s TV series about Shackleton and Scott and wrote a story about two guys on a slightly homoerotic eighty-one day sledge trek": REAL SHIT

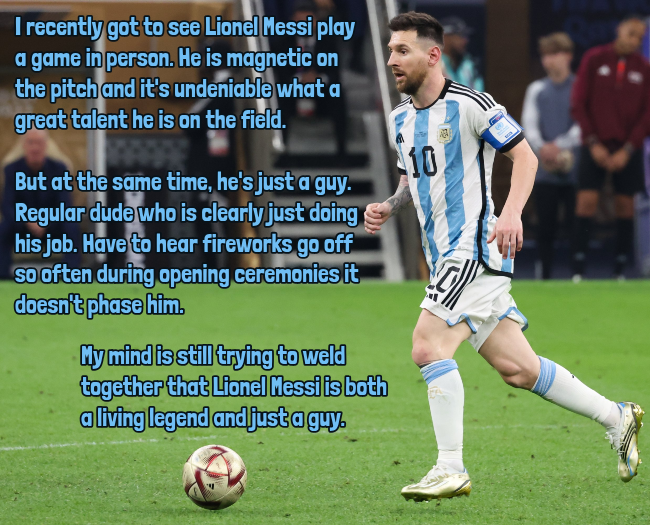

Premise: Genly Ai is the ambassador from the Ekumen (alliance of thousands of societies across eighty-plus planets) to the planet of Gethen, aka "Winter" for its frigid weather. He starts off in the country of Karhide, which seems like a comparatively backwards monarchy; the prime minister, Harth rem ir Estraven, says "Karhide is not a nation but a family quarrel." After two years without success in Karhide--and after Estraven gets fired and exiled for supporting him--Ai tries again in neighboring Orgoreyn, which is more of a sprawling bureaucracy with guaranteed employment for everyone and heated rooms. Maybe more promising? Nope, they send him to be interned and abused by the secret police. Eventually Estraven rescues him; there's a lot of culture shock and miscommunication, but Ai finally comes to believe that Estraven really does believe in the cosmopolitan mission of the Ekumen, as opposed to small-minded nationalism.

Okay, so what about the sex stuff? Gethenians are sexless most of the time; for a few days every month, during their reproductive years, they go into "kemmer" and develop sex organs, with a random chance of being male or female on any given occasion. This is accompanied by an intense physical drive to reproduce, so they partner up with someone else in kemmer. (At least in this book, though maybe not in the spinoff stories, all of the couplings are male-female.) If the female partner gets pregnant, she stays female through the pregnancy and nursing period; otherwise both partners become androgynous again until the next month.

Consider: There is no unconsenting sex, no rape. As with most mammals other than man, coitus can be performed only by mutual invitation and consent; otherwise it is not possible. Seduction certainly is possible, but it must have to be awfully well timed.

Consider: There is no division of humanity into strong and weak halves, protective/protected, dominant/submissive, owner/chattel, active/passive. In fact the whole tendency to dualism that pervades human thinking may be found to be lessened, or changed, on Winter.

...They do not see each other as men or women. This is almost impossible for our imagination to accept. What is the first question we ask about a newborn baby?

I'm unconvinced! Humans have a long track record of finding ways to oppress each other that have no grounding in scientific fact; I usually see "owner/chattel" language referencing racist slavery systems. I don't see why similar bigotry wouldn't exist in a place like Gethen. Gethen has small-scale skirmishes, assassinations, secret police brutality, etc., but has never actually had an all-out war, which Ai seems to attribute to the "no rape, no subjugation" system. And while we often ask "is it a boy or a girl?" about babies, we also often see birth announcements listing babies' height and weight, which is not something we ever do for adults. We fall back on vital stats because babies don't yet have language or personality traits or anything else to communicate with us.

But the place where it really didn't feel as radical as advertised/feared is the pronouns: all the chapters (even the ones that aren't directly narrated by Ai) use "he," "man," "brother," etc. as the default. Even the spaceships are "she"!

"...it is not human to be without shame and without desire."

"I suppose the most important thing, the heaviest single factor in one's life, is whether one's born male or female. In most societies it determines one's expectations, outlook, ethics, manners--almost everything...[women] don't often seem to turn up mathematicians, or composers of music, or inventors, or abstract thinkers."

The Ekumen have instantaneous interplanetary communication, and telepathic language that makes lying impossible. At times it seems utopian, although there was a war a couple of centuries ago. Given all that, I really don't believe that social stereotypes about what roles men and women should play would still be this pervasive across thousands of cultures.

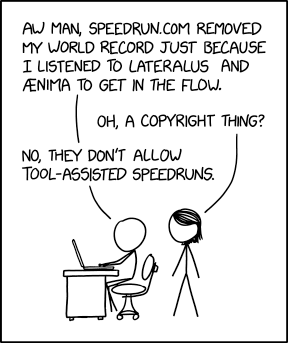

"The Left Hand of Darkness" was written in 1969. By 1983 we get Douglas Hofstadter's "A Person Paper on Purity in English," which goes disturbingly far in making the point that using 'he' as default is kinda messed up. A couple years later (1985), Hofstadter writes:

My feeling about nonsexist English is that it is like a foreign language that I am learning. I find that even after years of practice, I still have to translate sometimes from my native language, which is sexist English. I know of no human being who speaks Nonsexist as their native tongue. It will be very interesting to see if such people come to exist. If so, it will have taken a lot of work by a lot of people to reach that point.

For me, reading this in the 21st century, it feels really bizarre--I think my native dialect is much closer to Nonsexist English than Hofstadter could have predicted. The way I generally talk about people I don't know, or only know as streams of text coming through a computer screen, is as singular they: "whoever wrote this is an idiot and they should be fired." (This usage has a very long history in English; I draw a distinction between this and situations where a specific person asks to be consistently referred to as singular they, but some people lump the two together.)

Apparently Le Guin was responsive to this criticism and changed the way she handled Gethen in later stories, but I can only judge it on what's in front of me, and the use of "he," to me, says a lot more about the world of 1969 than the world of Winter. (I'm going to use "he," "brother," etc. for the rest of this review, but take this with a grain of salt.)

Anyway, obviously there are a lot of taboos from our world that don't translate into Gethen society. Siblings are allowed to kemmer together, but they can't vow a monogamous relationship--after one of them has a child, that's it, they have to break up.

Okay, now for the fun part, the sledging!

"What for?"

"Curiosity, adventure." He hesitated and smiled slightly. "The augmentation of the complexity and intensity of the field of intelligent life," he said, quoting one of my Ekumenical quotations.

I am not trying to say that I was happy, during those weeks of hauling a sledge across an ice-sheet in the dead of winter. I was hungry, overstrained, and often anxious, and it all got worse the longer it went on. I certainly wasn't happy. Happiness has to do with reason, and only reason earns it. What I was given was the thing you can't earn, and can't keep, and often don't even recognize at the time; I mean joy.

If I were to project this onto my Antarctica faves (ignore this part if you don't know or care who these people are): Ai is more in the role of Cherry-Garrard, who at first feels less able to cope with the physical demands of sledging, but who, as the survivor, is responsible for putting together his recollections in the past tense, blending the perspective of what he felt at the time with what he has learned since. Estraven is a combination of Bowers (shorter but surprisingly durable, with an incredible grasp of logistics and food supply, both essential for winter travel) and Wilson (insists on routine and patience, even when it drives Ai up the wall):

The business of setting up camp, making everything secure, getting all the clinging snow off one's outer clothing, and so on, was trying. Sometimes it did not seem worthwhile. It was so late, so cold, one was so tired, that it would be much easier to lie down in a sleeping-bag in the lee of the sledge and not bother with the tent. I remember how clear this was to me on certain evenings, and how bitterly I resented my companion's methodical, tyrannical insistence that we do everything and do it correctly and thoroughly. I hated him at such times, with a hatred that rose straight up out of the death that lay within my spirit. I hated the harsh, intricate, obstinate demands that he made on me in the name of life.

Estraven also keeps a journal of the trek, to keep in touch with his family back home. Oftentimes this is little more than the date and reports on temperature. Ai teaches him mindspeech, but he's careful not to let any hint of that slip into the journal, so we really are getting two separate points of view on the same events. Again, the contrast between "one party's recollection after the fact" and "people's real-time chronicles, which are probably brief and to the point because of the weather," is very much in the spirit of polar narratives.

I don't want to push this too far, but I think the contrast between the nationalistic goals of the Karhide and Orgoreyn factions and Ai's mission (which eventually becomes Estraven's too), which is both universal, through the Ekumen, and intensely personal, is probably making a broader point about exploration in our world.

Likewise, one of my favorite quotes from last year's bingo was in Le Guin's "Paradises Lost": "History must be what we have escaped from. It is what we were, not what we are. History is what we need never do again."

If it's not already obvious, I have been feeling a lot of emotions about Antarctica in the past few months or so, and in particular, I do think it's important that there is one place in the world that has nothing in the way of "History" with a capital H--warfare and oppression and suchlike--but does have a track record of science and exploration and friendship and narratives. Maybe this distinction is shallow or doesn't matter to other people. But I keep thinking of that quote, even though I know perfectly well it has nothing to do with Antarctica per se. Having read this book, I feel a little better about that connection; maybe Le Guin wouldn't think I'm crazy for it. :)

Bingo: I think the safest/most obvious connection is Politics. With varying amounts of stretching, I think there are cases to be made for Unusual Transportation (sledge hauling), Vacation Spot (if you're an Antarctica nerd), Explorers/Rangers, and First Contact (there were stealth observers sent to Gethen before, but Ai is the first to proclaim himself as an alien). I also think there's a case to be made that it should be eligible for exactly one of "Trans or Nonbinary Protagonist" or "Non-Human Protagonist," but it's in a quantum state of superposition and you can't determine which is which for most of the month...